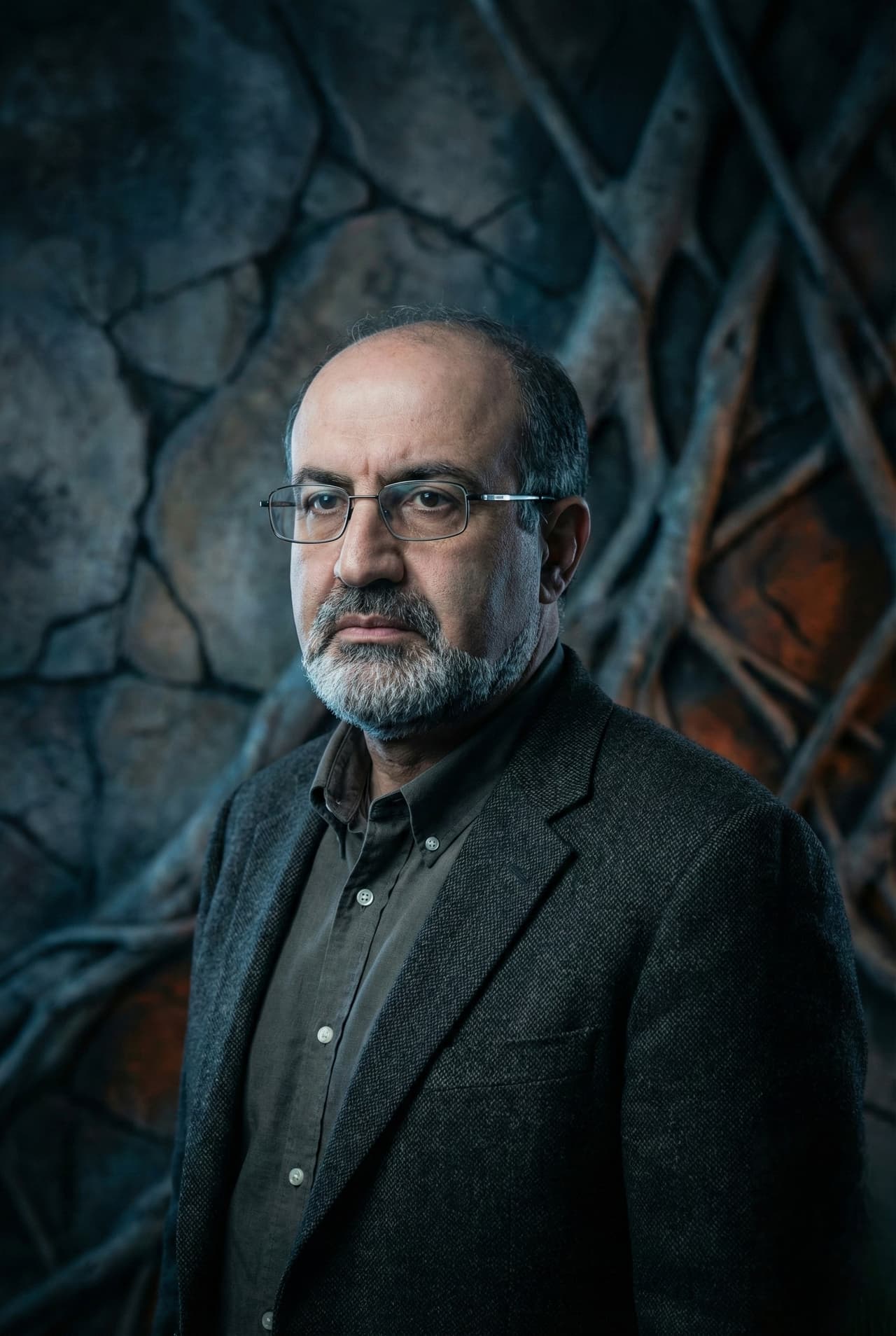

Nassim Nicholas Taleb

Systems fall into three distinct categories based on their response to disorder, volatility, and stress. Fragile systems suffer harm from unexpected shocks. Robust systems withstand stress but remain unchanged. Antifragile systems actually gain strength, grow, and improve when exposed to randomness and volatility. Biological systems naturally exhibit antifragility because they adapt and evolve through minor stressors, whereas artificially constructed systems often shatter when unpredictable events occur.

The distinction between the fragile, robust, and antifragile is illustrated by three mythological figures. Damocles dined with a sword dangling by a single hair above his head, representing pure fragility where any sudden change guarantees destruction. The Phoenix represents robustness because it is repeatedly consumed by fire but always returns in the exact same form. The Hydra embodies antifragility because severing one of its heads causes two new ones to grow back, making the creature stronger in response to a direct attack.

Modern society attempts to eliminate volatility through overprotective policies and complex regulations. This suppression of natural fluctuations stems from naive rationalism, an ideology that falsely assumes the world is completely understandable and controllable. By actively smoothing out natural jaggedness and artificially suppressing small stressors, modern institutions allow invisible risks to accumulate. This accumulated vulnerability eventually erupts into massive, catastrophic failures rather than harmless, incremental corrections.

Depriving an organism of stress directly causes it to become fragile. Bones lose density when not subjected to physical strain, and immune systems weaken without exposure to pathogens. The principle of hormesis holds that small doses of harmful substances or stressors stimulate an organism to increase its resistance. Frequent, low-impact disruptions serve as vital information that allows complex systems to continually recalibrate and fortify themselves against larger future shocks.

The barbell strategy achieves antifragility by combining two extreme approaches while entirely avoiding the moderate middle. An individual applies this by allocating the vast majority of resources to hyper-conservative, risk-free investments and dedicating a small fraction to highly speculative bets with massive potential payouts. This bimodal approach caps the maximum loss at the small speculative fraction while exposing the system to unlimited upside from positive random events. The extreme safety protects against catastrophic ruin while the extreme risk captures the benefits of unpredictable volatility.
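
The arithmetic behind the barbell can be sketched in a few lines of Python. This is a minimal illustration, not a method taken from the book: the function names, the 90/10 split, and the payoff multiple are all hypothetical assumptions chosen to show how the loss is capped while the upside is not.

```python
def barbell_outcome(capital, safe_fraction, safe_return, speculative_multiple):
    """Final wealth for a two-bucket allocation (hypothetical helper).

    safe_fraction:        share of capital held in the conservative bucket
    safe_return:          return on the conservative bucket (0.0 = capital preserved)
    speculative_multiple: what each unit in the risky bucket turns into
                          (0.0 = total loss, 20.0 = a twentyfold payoff)
    """
    if not 0.0 <= safe_fraction <= 1.0:
        raise ValueError("safe_fraction must lie between 0 and 1")
    safe = capital * safe_fraction
    risky = capital - safe
    return safe * (1.0 + safe_return) + risky * speculative_multiple


def worst_case_loss(capital, safe_fraction, safe_return=0.0):
    """Loss if every speculative bet goes to zero."""
    return capital - barbell_outcome(capital, safe_fraction, safe_return, 0.0)


if __name__ == "__main__":
    capital = 100.0
    # Assumed 90/10 split: the most that can be lost is the risky 10%.
    print("worst-case loss:", worst_case_loss(capital, 0.9))
    # The upside scales with the size of the lucky outcome.
    for multiple in (0.0, 5.0, 20.0):
        print(multiple, barbell_outcome(capital, 0.9, 0.0, multiple))
```

Under these assumptions the worst outcome is fixed in advance by the size of the speculative bucket, whereas the best outcome depends only on how large the favorable event turns out to be.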

Knowledge grows much more reliably through subtraction than through addition. We understand what is definitively wrong with far greater certainty than we understand what is right, meaning negative knowledge resists error better than positive knowledge. Improvement achieved by removing harmful elements yields more robust results than attempting to add beneficial ones. Curing an illness by eliminating a toxic diet provides a more durable health outcome than prescribing complex experimental medications.

Iatrogenics refers to the net damage caused by a healer or an intervening agent when the treatment inflicts more harm than the original affliction. Interventions in highly complex systems frequently trigger unforeseen secondary consequences that outstrip any immediate benefits. This risk peaks when authorities intervene in minor problems that naturally self-correct, transforming mild inconveniences into severe systemic crises. Action should be strictly reserved for dire emergencies where the potential payoff of intervention massively outweighs the hidden risks of tampering with a functioning system.

A system becomes fatally fragile when the individuals making decisions do not bear the consequences of their failures. True accountability requires that opinion makers, bankers, and politicians face direct personal ruin if their strategies or forecasts cause harm to others. This alignment of risk and reward naturally filters out reckless behavior and poor decision-making. Without personal stakes, managers aggressively hide risks to collect immediate bonuses, effectively transferring fragility from themselves onto the broader society.

The life expectancy of nonperishable items and ideas increases with every day they survive, a pattern known as the Lindy effect. A technology or piece of literature that has endured for a century can be expected to survive for another century. Modern consumers suffer from neomania, an irrational obsession with the newest trends and technologies that blinds them to the inherent fragility of untested innovations. Time acts as the ultimate filter for robustness, ruthlessly discarding fragile concepts while preserving heuristics and traditions that naturally resist disorder.
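
One common way to formalize the claim that expected remaining life grows with age is to assume lifetimes follow a Pareto (power-law) distribution. That modeling choice is an assumption made here for illustration and is not stated in the text above. Under it, the expected remaining life of something that has already survived to age `age` is `age / (alpha - 1)`, so with a tail index of `alpha = 2` a century of survival implies roughly another century. The function names below are hypothetical.

```python
import random


def expected_remaining_life(age, alpha):
    """Closed form E[T - age | T > age] for a Pareto lifetime, tail index alpha > 1."""
    if alpha <= 1.0:
        raise ValueError("alpha must exceed 1 for a finite expectation")
    return age / (alpha - 1.0)


def simulated_remaining_life(age, alpha, draws=200_000, seed=0):
    """Monte Carlo check of the closed form.

    random.paretovariate draws lifetimes with minimum value 1, so age should
    be at least 1 and small enough that some draws survive past it.
    """
    rng = random.Random(seed)
    lifetimes = (rng.paretovariate(alpha) for _ in range(draws))
    survivors = [t for t in lifetimes if t > age]
    return sum(t - age for t in survivors) / len(survivors)


if __name__ == "__main__":
    # Older survivors have a longer expected future under this model.
    for age in (10.0, 50.0, 100.0):
        print(age, expected_remaining_life(age, alpha=2.0))
    # Simulation versus closed form for a lighter tail (alpha = 3).
    print(simulated_remaining_life(2.0, 3.0), expected_remaining_life(2.0, 3.0))
```

The contrast is with perishable things: a human who has lived ninety years has fewer expected years left than one who has lived ten, whereas under the power-law assumption every additional year survived lengthens the expected future.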

Optionality grants the right but not the obligation to take a specific action, allowing a system to capture massive gains from favorable outcomes while limiting losses from unfavorable ones. Trial and error relies heavily on this principle. Small, contained failures provide critical data and allow tinkerers to discard bad ideas at a low cost. Grand theoretical plans regularly fail because they demand absolute predictive accuracy, whereas constant tinkering naturally gravitates toward success by systematically exploiting positive randomness.
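
The asymmetry described above can be made concrete with a toy payoff comparison. The sketch below assumes a hypothetical two-outcome world (an equally likely gain or loss of the same size) and a small fixed cost per trial; none of these names or numbers come from the source. It contrasts a tinkerer who may abandon a bad result with someone obligated to accept whatever happens.

```python
def option_payoff(outcome, cost):
    """Holder keeps gains, walks away from losses, and pays a fixed trial cost."""
    return max(outcome, 0.0) - cost


def obligated_payoff(outcome):
    """No choice: the full outcome is absorbed, good or bad."""
    return outcome


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def expected_payoffs(spread, cost):
    """Average payoff over two equally likely outcomes, -spread and +spread."""
    outcomes = [-spread, spread]
    with_option = mean(option_payoff(x, cost) for x in outcomes)
    without_option = mean(obligated_payoff(x) for x in outcomes)
    return with_option, without_option


if __name__ == "__main__":
    # Widening the spread (more volatility) helps only the option holder.
    for spread in (2.0, 10.0, 20.0):
        print(spread, expected_payoffs(spread, cost=1.0))
```

In this toy setup the obligated position averages out to zero no matter how volatile the outcomes are, while the optional position improves as the spread widens, because each failure costs only the fixed price of the trial.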

Intellectuals routinely mistake the visibility of certain knowledge for its actual necessity in practical applications. A commodity trader might build an immense fortune trading green lumber while incorrectly believing the wood is painted green rather than simply freshly cut. The trader succeeds entirely through an intuitive grasp of order flow and market dynamics, not through an academic understanding of the underlying physical product. Focusing on articulate narratives and theoretical models causes observers to overlook the uncodified, practical expertise that actually drives survival and success.